Is Your Teen Using AI for Mental Health Advice?

- Gurprit Ganda

- Mar 20

- 4 min read

The Conversation Your Teen Is Having — But Not With You

When your teenager feels sad, anxious, or overwhelmed, who do they turn to? Increasingly, the answer is not a friend, a parent, or a counsellor. It is an AI chatbot.

A landmark 2025 study published in JAMA Network Open found that 13.1% of American adolescents and young adults (approximately 5.4 million individuals) use generative AI chatbots such as ChatGPT for mental health advice when feeling sad, angry, or nervous (McBain et al., 2025). Among 18- to 21-year-olds, the figure rises to 22.2%. Of those who use chatbots this way, 65.5% do so at least monthly, and 92.7% reported finding the advice somewhat or very helpful.

In Australia, where our under-16 social media ban may push young people toward alternative digital platforms, understanding how teens use AI for emotional support is more urgent than ever. This guide is for parents in the Hills District and beyond who want to understand what is happening, what the risks are, and when professional help is needed.

Why Teens Are Turning to AI for Emotional Support

The reasons are straightforward: AI chatbots are free, available 24/7, feel private and non-judgmental, and respond instantly. For a teenager who is anxious about asking for help, who cannot afford therapy, or who does not want to worry their parents, a chatbot feels like a safe first step.

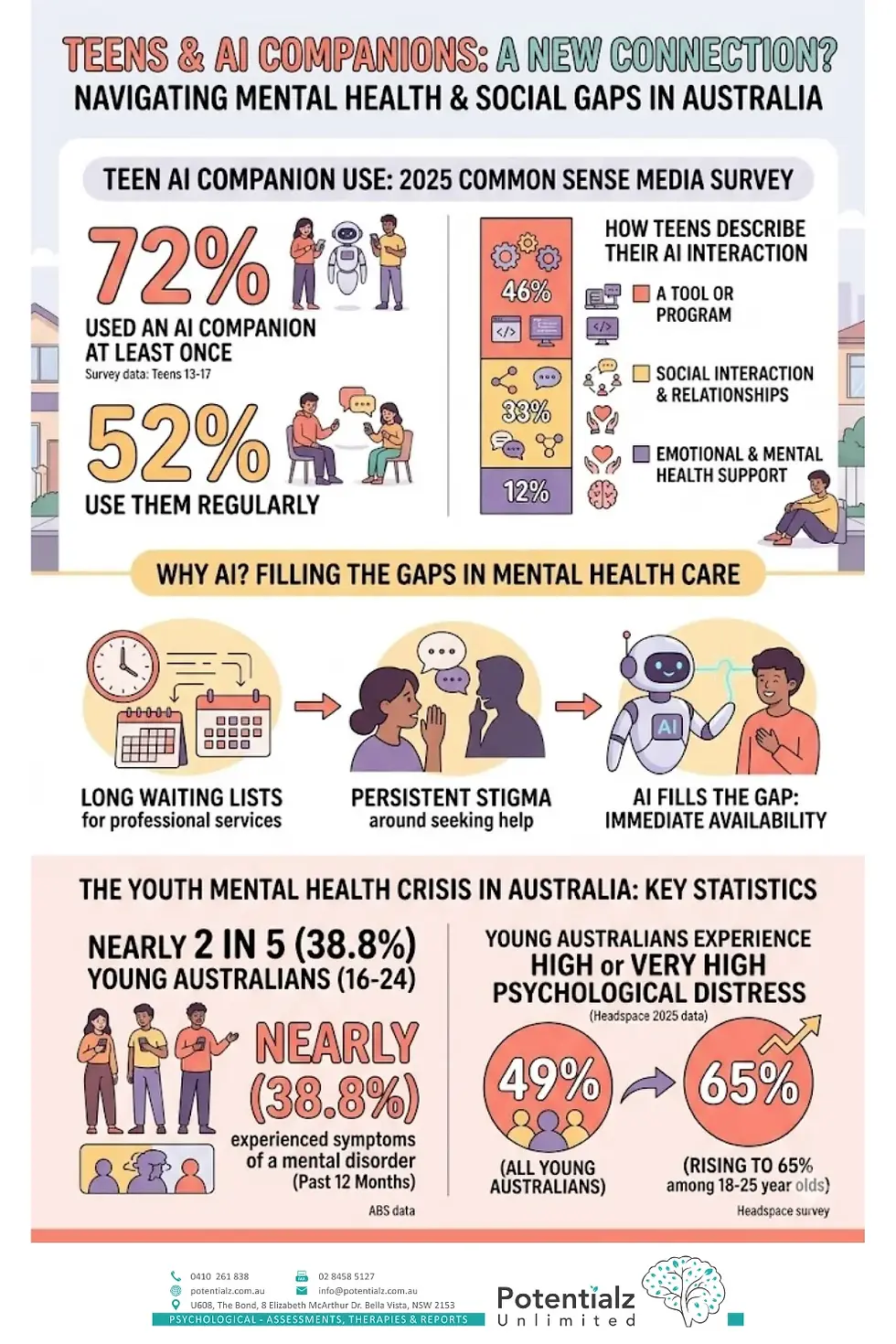

According to a 2025 Common Sense Media survey of US teens aged 13 to 17, 72% have used an AI companion at least once and 52% are regular users. Nearly half (46%) described AI companions as "a tool or program", but 33% had used them for social interaction and relationships, and 12% specifically for emotional or mental health support.

The appeal is amplified by the youth mental health crisis. The most recent Australian Bureau of Statistics data shows that nearly two in five (38.8%) young Australians aged 16 to 24 have experienced symptoms of a mental disorder in the past 12 months. A 2025 headspace survey found that 49% of young Australians experience high or very high psychological distress, rising to 65% among 18- to 25-year-olds. With long waiting lists for professional services and persistent stigma around seeking help, AI is filling the gap.

The Real Risks of AI Chatbots for Vulnerable Teens

While AI chatbots can provide general coping suggestions, they carry significant risks for vulnerable young people. A December 2025 article in JAACAP Connect described the case of a 14-year-old boy with autism who developed an intense emotional attachment to a Character.AI chatbot. The boy engaged in age-inappropriate conversations with the AI, withdrew from family and friends, and tragically died by suicide (Ng, 2025). The case underscores the dangers of AI companion apps that are designed to maximise engagement without safeguards for vulnerable users.

The key risks include:

- Misinformation: AI chatbots can give inaccurate or harmful mental health advice, because they are not trained clinicians and cannot assess risk.
- Emotional dependency: vulnerable teens may develop unhealthy attachments to AI "companions" that replace real human connection.
- Privacy concerns: conversations with AI may be stored and used as training data, and teens may share sensitive information without understanding the implications.
- A false sense of treatment: using a chatbot may lead teens to delay seeking the professional help they actually need.
- Inappropriate content: some AI platforms have failed to implement adequate safety filters for underage users.

What AI Can and Cannot Do for Mental Health

It is important for parents to understand the difference between what AI chatbots can and cannot provide. AI can offer general wellness information and coping suggestions, provide a space for teens to "think out loud" by writing about their feelings, suggest relaxation techniques like breathing exercises, and direct users toward professional resources.

AI cannot diagnose mental health conditions; assess suicide or self-harm risk; provide personalised therapy based on clinical assessment; understand context, body language or emotional nuance; build a genuine therapeutic relationship; or provide crisis intervention. As McBain and colleagues at RAND noted, there are "few standardised benchmarks for evaluating mental health advice offered by AI chatbots, and there is limited transparency about the datasets used to train these models" (McBain et al., 2025).

How to Talk to Your Teen About AI and Mental Health

Rather than banning AI use outright (which can drive it underground), have open, non-judgmental conversations. Ask your teen whether they have ever asked an AI chatbot for advice about their feelings. Listen without reacting. Let them know you understand why AI might feel easier than talking to a person. Explain the limitations of AI — that it cannot truly understand their situation, assess risk, or provide the nuanced care a psychologist can. Set boundaries together about which platforms are appropriate and discuss privacy implications. Make professional help accessible and normalised — frame it as a strength, not a weakness.

When Your Teen Needs a Real Psychologist, Not a Chatbot

Seek professional help if your teen is:

- withdrawing from family and friends in favour of AI interactions;
- expressing thoughts of self-harm or hopelessness (to you or to AI);
- using AI chatbots as their primary source of emotional support; or
- showing signs of anxiety, depression, or behavioural changes lasting more than two weeks.

You should also seek help if you are concerned about the content of their AI conversations.

At Potentialz Unlimited in Bella Vista, our child psychologist provides confidential, evidence-based therapy for adolescents. We offer a safe space where your teen can talk to a real person who is trained to listen, assess, and help. Book a consultation by calling 0410 261 838 or visiting live.potentialz.com.au.

References

McBain, R. K., Bozick, R., Diliberti, M., et al. (2025). Use of Generative AI for Mental Health Advice Among US Adolescents and Young Adults. JAMA Network Open, 8(11), e2542281. https://doi.org/10.1001/jamanetworkopen.2025.42281

Ng, S. (2025). Navigating Adolescent Mental Health in the Age of Artificial Intelligence. JAACAP Connect, 13(1), 13–16. https://doi.org/10.62414/001c.150329

Headspace. (2025). Nearly half of young Australians experiencing high or very high levels of psychological distress. Headspace National Youth Mental Health Foundation.

Australian Bureau of Statistics. (2024). National Study of Mental Health and Wellbeing. ABS.

Common Sense Media. (2025). Teens and AI Companions Survey. Common Sense Media.

Comments